SERUM: Simple, Efficient, Robust, and Unifying Marking for Diffusion-based Image Generation

CISPA Helmholtz Center for Information Security

*Indicates Equal Contribution

AI image generators now produce content of such high quality that it is often impossible to distinguish real, human-created content from synthetic, AI-generated content. This creates practical problems: deepfakes, copyright disputes, misinformation, and even model collapse when new generative models are trained on scraped synthetic images produced by earlier models. One solution to these problems is watermarking, which embeds an imperceptible signal in generated content so that it can later be detected algorithmically.

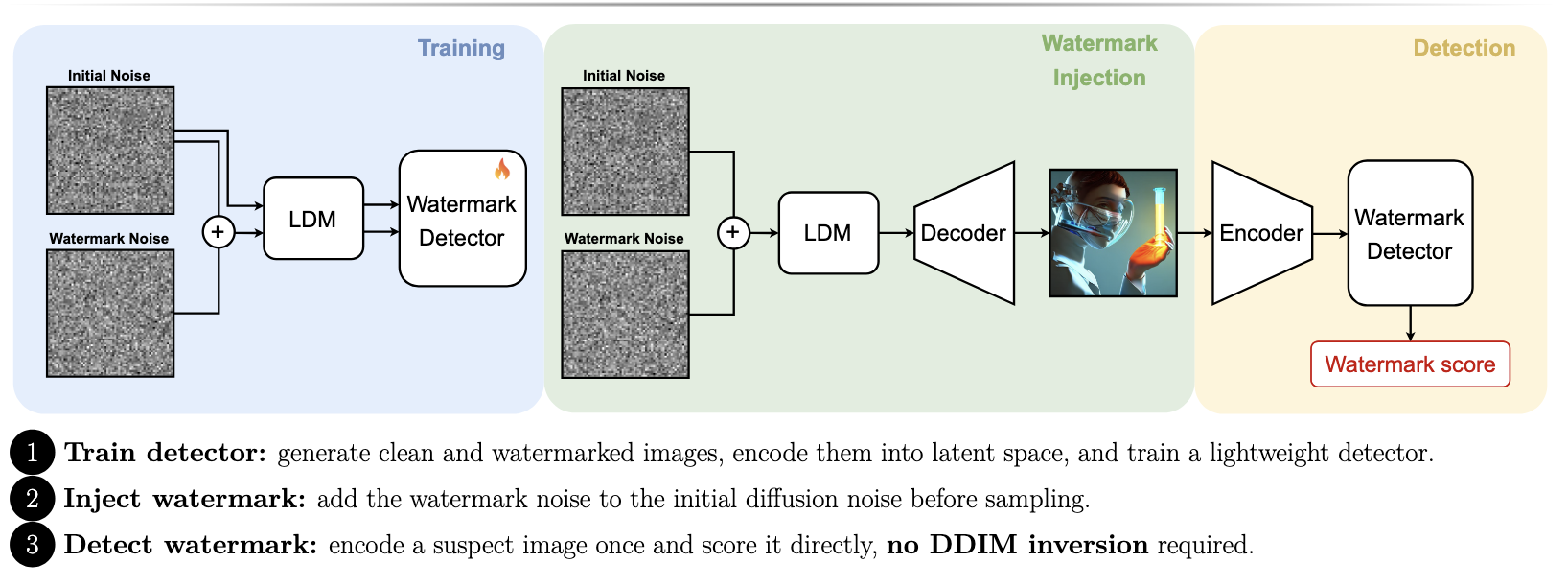

SERUM is a simple watermarking method for diffusion models. Instead of editing the final image or fine-tuning the generator, SERUM adds a watermark noise pattern at the very start of generation and trains a lightweight detector to recognize the watermark.

The problem with existing watermarks

Traditional post-processing watermarks modify the final image, often in pixel or frequency space. They are fast, but can be fragile: cropping, compression, rotation, noise, or generative “washing” attacks can remove them.

Newer diffusion-specific methods embed watermarks inside the generation process. These are usually more robust, but often come with a cost. Some require fine-tuning parts of the large generative model. Others, such as Tree-Ring, watermark the initial diffusion noise but then require expensive diffusion inversion to detect the watermark, essentially trying to reconstruct the original noise from the final image.

SERUM tries to get the best of both worlds. We add the watermark signal at the initial noise stage, avoid diffusion inversion for speed, and keep the original generator unchanged. The only component we train is a lightweight external watermark detector.

The core idea behind SERUM

Diffusion models start from random Gaussian noise and gradually denoise it into an image. SERUM modifies this starting noise by adding a watermark-specific noise pattern. The key intuition is simple: if the watermark is present from the very beginning, the denoising process naturally carries its signature into the generated image. The result is a statistical fingerprint that a trained detector can learn to recognize.
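The injection step can be sketched as follows. This is a minimal illustration, not the released SERUM code: the function names, the seeded unit-norm pattern, and the `strength` scale are all assumptions, since the post only states that a fixed, normalized noise pattern is added to the initial diffusion noise. Latents are represented as flat Python lists to keep the sketch dependency-free.

```python
import math
import random


def make_pattern(dim: int, seed: int) -> list[float]:
    """Hypothetical fixed watermark pattern: seeded Gaussian noise rescaled to unit L2 norm."""
    rng = random.Random(seed)
    pattern = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(v * v for v in pattern))
    return [v / norm for v in pattern]


def watermark_initial_noise(noise: list[float], pattern: list[float], strength: float) -> list[float]:
    """Add the fixed pattern, scaled by an assumed `strength`, to one image's initial noise."""
    if len(noise) != len(pattern):
        raise ValueError("noise and pattern must have the same number of elements")
    return [n + strength * p for n, p in zip(noise, pattern)]


# Usage: a 512x512 Stable Diffusion 1.x image has a 4x64x64 latent, flattened here.
DIM = 4 * 64 * 64
PATTERN = make_pattern(DIM, seed=1234)           # created once, reused for every image
rng = random.Random(0)
z_T = [rng.gauss(0.0, 1.0) for _ in range(DIM)]  # fresh Gaussian noise for this image
z_T_wm = watermark_initial_noise(z_T, PATTERN, strength=16.0)  # strength value is illustrative
```

In this sketch, `z_T_wm` would simply be handed to the unchanged sampler in place of `z_T`. Because the same pattern is shared by all images, injection costs one vector addition per image, which is why it is essentially free.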

To detect the watermark, we do not invert the diffusion process. Instead, we encode the image back into the latent space using the diffusion model's encoder and feed that latent into a lightweight watermark detector, which outputs a watermark score. To make the detector robust, we train it with many image transformations, including rotation, JPEG compression, and crop-and-scale, among others. We also use a dynamic augmentation sampler inspired by prioritized experience replay: the training process pays more attention to the transformations that currently fool the detector, which helps the detector learn robustness where it is weakest.
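The post does not spell out the sampler's exact rule, so the following is only a sketch in the spirit of prioritized experience replay, where item i is drawn with probability (p_i + ε)^α / Σ_k (p_k + ε)^α. Here each augmentation's priority p_i is assumed to be a running average of the detector's loss on images transformed by it; the class name and the `alpha`, `eps`, and `momentum` parameters are hypothetical.

```python
import random


class PrioritizedAugmentationSampler:
    """Sketch: draw augmentations with probability proportional to (priority + eps) ** alpha.

    The priority of an augmentation is assumed to be an exponential moving average of the
    detector's loss on images transformed by it (a hypothetical choice, not SERUM's code).
    """

    def __init__(self, names, alpha=0.6, eps=1e-3, momentum=0.9, seed=None):
        self.names = list(names)
        self.alpha = alpha
        self.eps = eps
        self.momentum = momentum
        self.priority = {name: 1.0 for name in self.names}  # start with uniform priorities
        self.rng = random.Random(seed)

    def probabilities(self):
        weights = [(self.priority[n] + self.eps) ** self.alpha for n in self.names]
        total = sum(weights)
        return {n: w / total for n, w in zip(self.names, weights)}

    def sample(self, k):
        probs = self.probabilities()
        return self.rng.choices(self.names, weights=[probs[n] for n in self.names], k=k)

    def update(self, name, loss):
        # High loss means this transformation currently fools the detector, so raise its priority.
        old = self.priority[name]
        self.priority[name] = self.momentum * old + (1.0 - self.momentum) * loss


sampler = PrioritizedAugmentationSampler(
    ["rotation", "jpeg", "crop_and_scale", "gaussian_noise"], seed=0
)
sampler.update("crop_and_scale", 5.0)  # detector struggles here, so it gets sampled more
sampler.update("jpeg", 0.0)            # detector handles JPEG well, so it gets sampled less
batch_augmentations = sampler.sample(8)
```

The exponent `alpha` controls how aggressive the prioritization is: `alpha = 0` recovers uniform sampling, while larger values concentrate training on the currently hardest transformations.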

Experimental Results

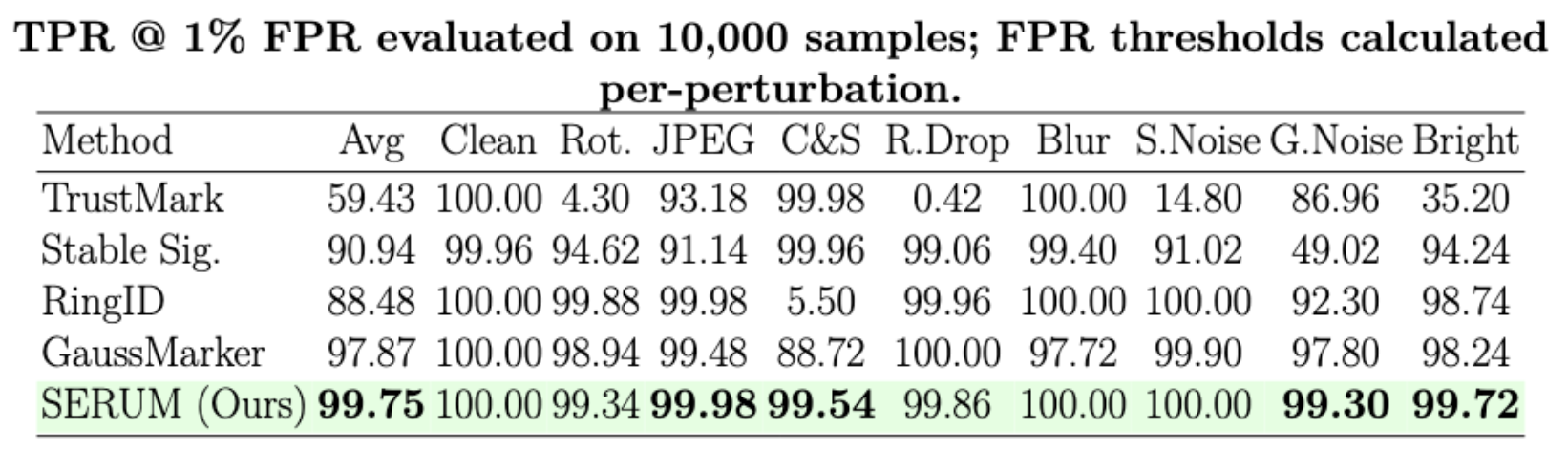

The headline result is strong: across Stable Diffusion 1.4, 2.0, and 2.1, SERUM is robust to standard perturbations. A quick note on metrics: the true positive rate (TPR) is the fraction of watermarked images that are correctly detected, and the false positive rate (FPR) is the fraction of clean images that are incorrectly flagged. TPR@1%FPR is the TPR measured at the detection threshold that flags at most 1% of clean images. SERUM achieves above 99% TPR@1%FPR against standard perturbations, as shown in the figure above.
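For readers who want to compute this metric on their own detector scores, here is one common way to obtain TPR at a fixed FPR: choose the threshold from the clean-image scores so that at most 1% of them are flagged, then measure the fraction of watermarked images above it. This is a generic sketch; the function name and the strict `>` thresholding convention are assumptions, not taken from the SERUM code.

```python
def tpr_at_fpr(clean_scores, watermarked_scores, target_fpr=0.01):
    """Return (TPR, threshold) where an image is flagged iff its score > threshold."""
    if not clean_scores or not watermarked_scores:
        raise ValueError("both score lists must be non-empty")
    if not 0.0 <= target_fpr < 1.0:
        raise ValueError("target_fpr must be in [0, 1)")
    clean = sorted(clean_scores, reverse=True)
    # Number of clean images we are allowed to flag as watermarked.
    allowed = int(target_fpr * len(clean))
    # Only scores strictly above the (allowed+1)-th highest clean score are flagged,
    # so at most `allowed` clean images (FPR <= target) can exceed the threshold.
    threshold = clean[allowed]
    detected = sum(1 for s in watermarked_scores if s > threshold)
    return detected / len(watermarked_scores), threshold


clean = [i / 100 for i in range(100)]            # toy detector scores on clean images
watermarked = [0.5, 0.985, 0.99, 1.2]            # toy scores on watermarked images
tpr, threshold = tpr_at_fpr(clean, watermarked)  # threshold = 0.98, tpr = 0.75
```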

Compared with TrustMark, Stable Signature, RingID, and GaussMarker, SERUM is the most stable across attacks. Other methods often perform well in some cases but fail badly in others: for example, RingID collapses under crop-and-scale, while Stable Signature struggles under Gaussian noise.

SERUM also performs well against stronger watermark-removal attacks based on generative models. We evaluated attacks such as VAE regeneration, diffusion regeneration, Rinse, CtrlRegen, and image-to-video transformation. SERUM reaches an average of 90.87% TPR@1%FPR, outperforming RingID and GaussMarker in this benchmark. The hardest case is image-to-video, where SERUM achieves 58.20% TPR: not perfect, but still better than the compared baselines.

Quality and Efficiency

SERUM has a negligible impact on image quality. FID increases slightly, indicating a small degradation or distribution shift, while the CLIP score remains almost unchanged. Qualitatively, the watermarked examples preserve the main content and style, though details such as pose, shape, or perspective may change. We also provide a theoretical argument for why SERUM preserves image fidelity relatively well.

A major advantage of SERUM is speed. Because injection only adds a fixed latent noise pattern, watermarking is essentially free: injecting the watermark into 5000 images takes only 17 ms in total on an NVIDIA A100 GPU. Detection takes 2.5 minutes for the same 5000 images, which is dramatically faster than inversion-based approaches such as GaussMarker, which need almost 2 hours to detect the watermarks in the same setup. Training cost is also moderate: about 9.5 hours, including data generation and augmentation precomputation.
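The reported timings translate into the following per-image costs and detection speedup. This is simple arithmetic on the numbers above, treating "almost 2 hours" as an upper bound of 2 hours, so the true speedup is slightly below 48:

$$
\frac{17\ \text{ms}}{5000\ \text{images}} = 3.4\ \mu\text{s per image (injection)}, \qquad
\frac{2.5\ \text{min}}{5000\ \text{images}} = \frac{150\ \text{s}}{5000} = 30\ \text{ms per image (detection)}
$$

$$
\frac{t_{\text{GaussMarker}}}{t_{\text{SERUM}}} \lesssim \frac{120\ \text{min}}{2.5\ \text{min}} = 48
$$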

Multi-user watermarking

SERUM also supports many users. The idea is to assign each user a unique combination of noise patterns; the detector then checks which combination is present. We use 135 base patterns and assign each user a distinct pair of them, which yields C(135, 2) = 135 × 134 / 2 = 9045 possible users. At that scale, SERUM maintains strong detection and user-identification accuracy, even under many perturbations. This makes the method useful not only for answering "was this image generated?" but also "which model owner, customer, or account generated it?"
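The pair-based user keys can be sketched with the standard library. The enumeration order of pairs and the decoding rule (take the two highest-scoring base patterns) are assumptions for illustration; the post only says that each user gets a unique pair of the 135 base patterns and that the detector checks which combination is present. The names `USER_KEYS`, `patterns_for_user`, and `identify_user` are hypothetical.

```python
import itertools
import math

NUM_PATTERNS = 135  # number of base patterns, as stated in the post

# Each unordered pair of distinct base patterns is one user key: C(135, 2) = 9045 users.
USER_KEYS = list(itertools.combinations(range(NUM_PATTERNS), 2))
KEY_TO_USER = {key: user for user, key in enumerate(USER_KEYS)}


def patterns_for_user(user_id):
    """Return the (hypothetical) pair of base-pattern indices assigned to a user."""
    if not 0 <= user_id < len(USER_KEYS):
        raise ValueError("unknown user id")
    return USER_KEYS[user_id]


def identify_user(pattern_scores):
    """Assumed decoding rule: the two highest per-pattern detector scores form the user's pair."""
    if len(pattern_scores) != NUM_PATTERNS:
        raise ValueError(f"expected {NUM_PATTERNS} pattern scores")
    ranked = sorted(range(NUM_PATTERNS), key=lambda i: pattern_scores[i], reverse=True)
    return KEY_TO_USER[tuple(sorted(ranked[:2]))]


assert len(USER_KEYS) == math.comb(NUM_PATTERNS, 2) == 9045
```

Using pairs rather than single patterns multiplies the number of distinguishable users by 67 (9045 keys from 135 patterns), while the detector still only needs to recognize 135 base patterns.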

Radioactivity: the watermark survives training new models

One of the most interesting findings is that SERUM is radioactive. In watermarking, radioactivity means that the signal survives when watermarked images are used to train or fine-tune another model. We fine-tune models on SERUM-watermarked images and then check whether outputs from those adapted models still contain the watermark signal.

| Adapted Model | TPR@1%FPR |
|---|---|
| SD 1.4 (LoRA) | 52.30% |
| SD 1.4 (FFT) | 77.12% |
| SANA-0.6B (FFT) | 96.76% |

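The watermark signal persists under both LoRA and full fine-tuning (FFT), and is strongest under full fine-tuning. This shows that SERUM enables tracing data provenance through model training pipelines, which becomes increasingly important as synthetic data becomes more common.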

Summary

SERUM’s appeal is its simplicity. Add a fixed normalized noise pattern to the initial diffusion noise, generate the image, and train a small watermark detector to recognize the resulting fingerprint. This combination gives SERUM several strengths at once: high robustness, fast injection, fast detection compared with inversion-based methods, low image-quality impact, multi-user support, and even radioactive persistence through downstream training. For watermarking diffusion-generated images, SERUM is a strong example of a method where the simplest idea may also be the most practical.

Learn More

- Read the full paper: arXiv

- Explore the code: GitHub

- Our ICLR 2026 Poster (PDF)

BibTeX

@inproceedings{kociszewski2026serum,
  title={SERUM: Simple, Efficient, Robust, and Unifying Marking for Diffusion-based Image Generation},
  author={Jan Kociszewski and Hubert Jastrz{\k{e}}bski and Tymoteusz St{\k{e}}pkowski and Filip Manijak and Krzysztof Rojek and Franziska Boenisch and Adam Dziedzic},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026},
  url={https://openreview.net/forum?id=AiBUm6iKBf}
}